Who Wins in the AI Era of Personal Computing? Integrations, Multimodality, and Open Source

Last month’s announcements and demos from OpenAI and Google feel like a step change in latency, cost, and user experience, ushering in a new era for personal computing products.

I won't go too much into the specific products and demos (just a couple) that were shown, as I'm sure folks are already aware of those. The more interesting question is: Which companies are making the right moves and have a competitive advantage to win over future consumers?

OpenAI’s challenges in the consumer market

OpenAI's recent announcements seemed to be more about the consumer market than about advancing the capabilities of its frontier model. GPT-4o ("omni") can reason across speech, text, and vision, providing a multimodal upgrade to the GPT-4 model. To the surprise of few, there were no major updates to the capabilities of its foundation model, i.e., we do not yet have a GPT-5. However, costs are down 50% from its previous most advanced model, GPT-4 Turbo, and the latency for voice has been significantly reduced by using a single model trained across all three modalities.

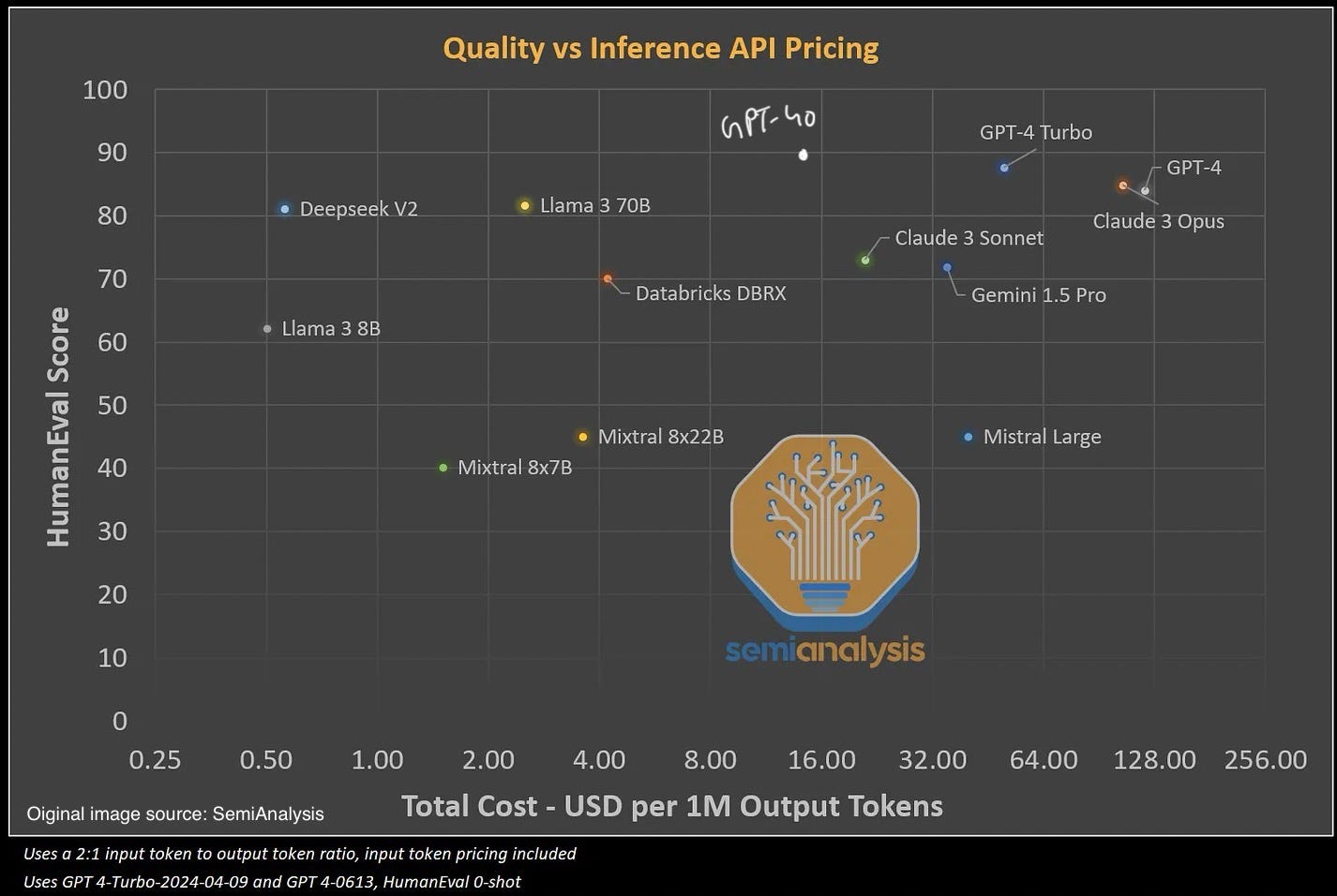

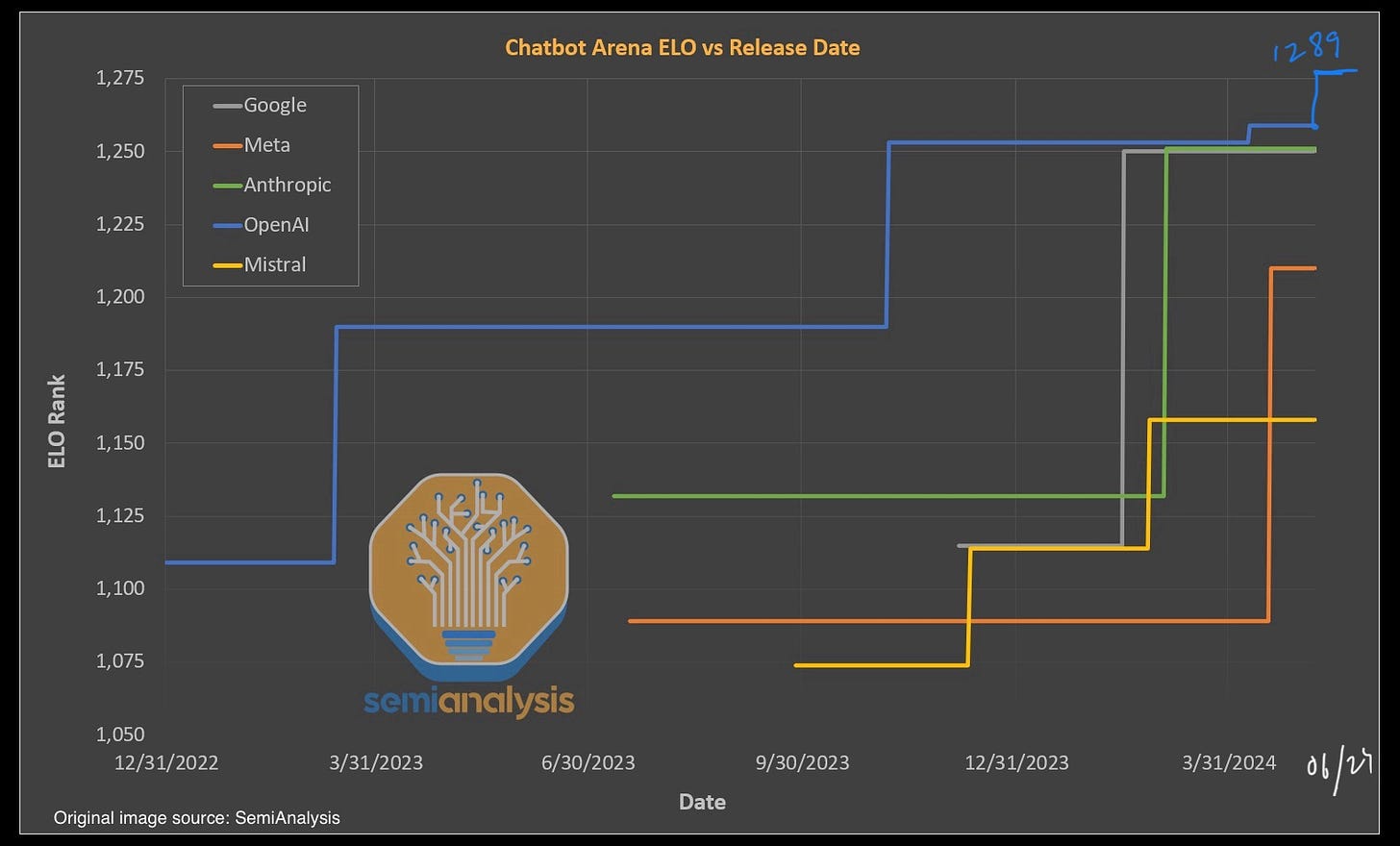

It's no surprise that the consumer market is attractive to OpenAI, as its current subscription business model seems harder to sustain in the long run while more open source options commoditise LLMs themselves. The cost of inference is coming down, and most open source models, while not yet on par with GPT-4, are "good enough" for most consumer use cases (summarisation, text generation, image generation, etc.). Let's look at how some of the other models on the market compare to OpenAI's GPT-4 and 4o:

But as I said, the consumer space is attractive: it offers the potential to build a high-margin advertising business by aggregating user attention, as Google and Facebook have shown. The key problem for OpenAI is its lack of distribution and network effects. ChatGPT does not come pre-installed on the more than 3 billion Android phones or the 1.5 billion iPhones. Also, my use of ChatGPT does not make it more useful or attractive to the next marginal user (no network effects).

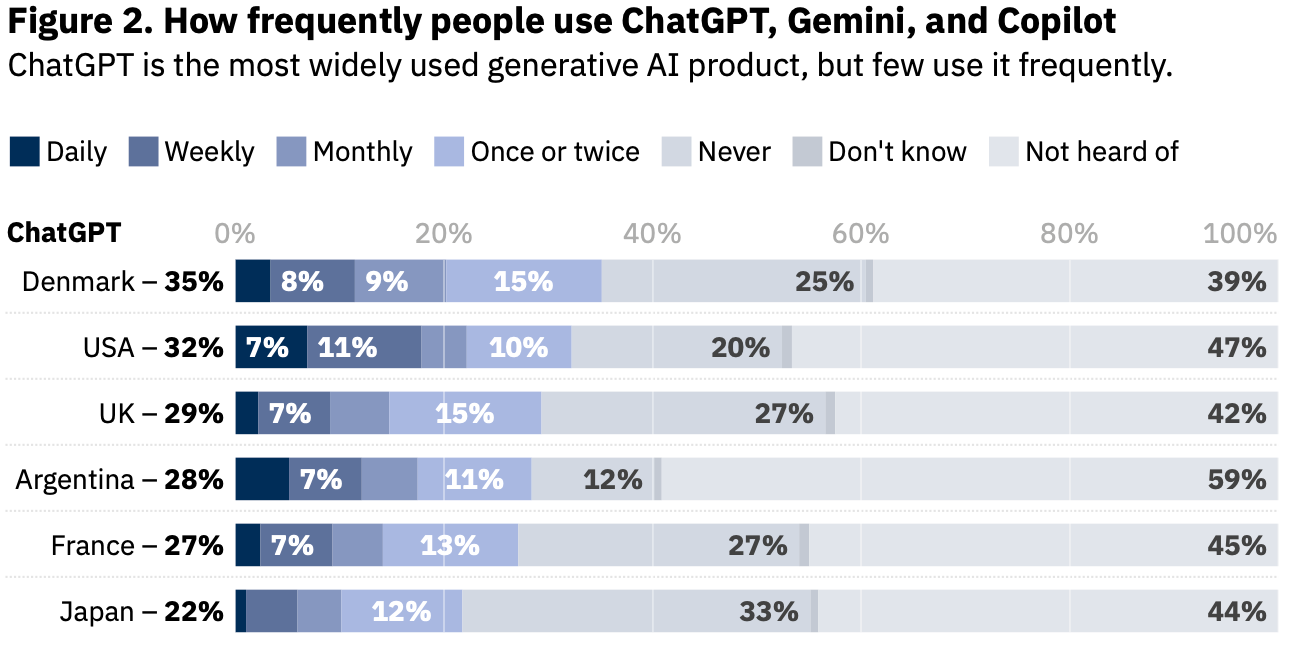

source: Reuters Institute data on generative AI adoption and attitudes (May 2024)

The data above shows that most people in the developed world do not use ChatGPT frequently, and a large proportion have never even heard of it. The picture is similar for comparable offerings such as Google Gemini and Microsoft Copilot.

To overcome its distribution challenge and retain its subscription customers, OpenAI needs to innovate faster than its competitors to build better user experiences and foundation models. That means more capital expenditure to train larger models, higher marginal costs for serving frontier models, and larger development costs to build these products. However, this is less of a problem for OpenAI thanks to its unique partnership with Microsoft, under which Microsoft provides the infrastructure for training and inference, which in turn translates into profits for Microsoft's cloud division (as the exclusive cloud provider). Microsoft also retains the IP developed by OpenAI (at least until AGI is developed).

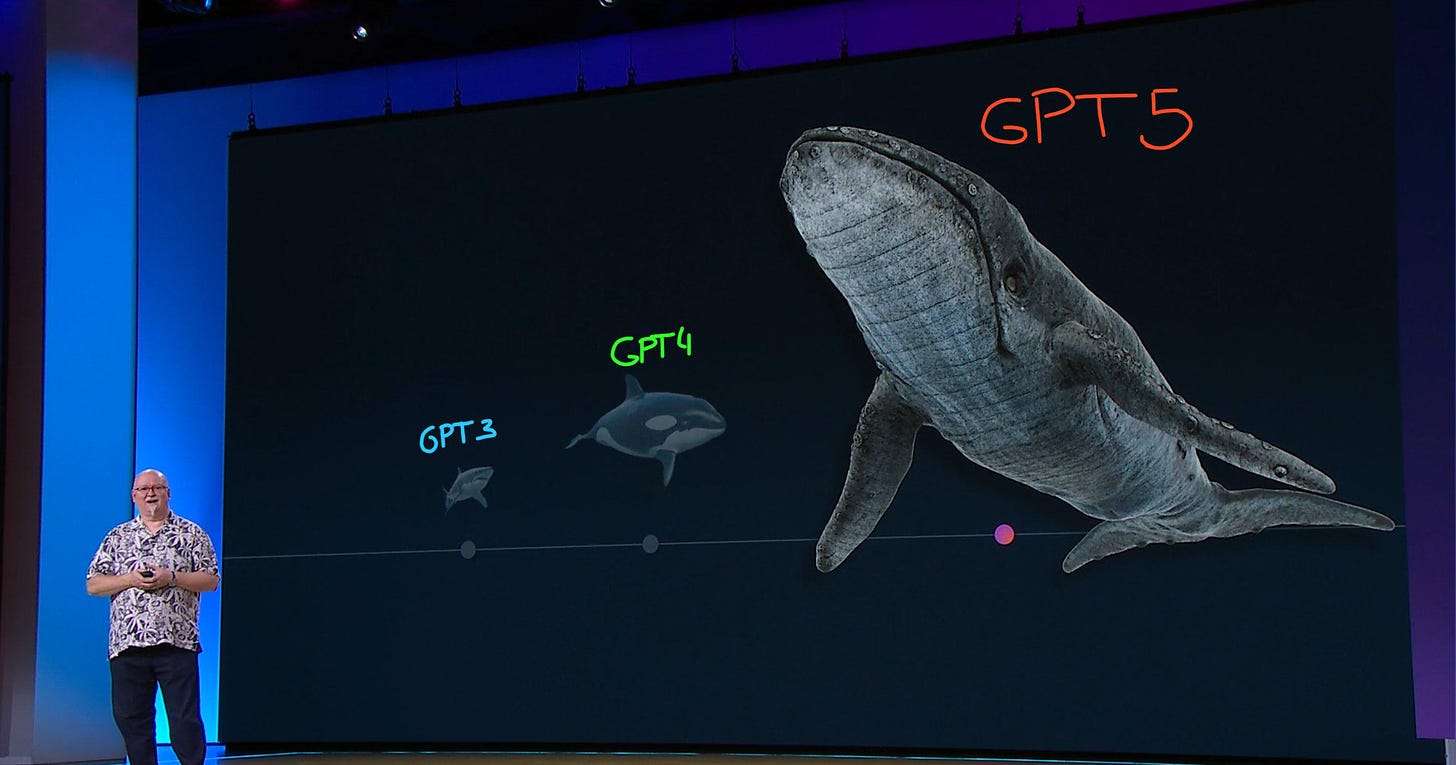

Source: Microsoft Build event, where CTO Kevin Scott compared the size of a shark (GPT-4) to that of an orca (GPT-5) to show that scaling laws still hold

Despite OpenAI's partnership with Microsoft for its Copilot products and a potential deal with Apple, not all user traffic will be served through OpenAI APIs, as both companies are working on their own on-device LLMs and larger foundation models. Microsoft is reportedly developing a powerful AI model called MAI-1. This project is said to be led by Mustafa Suleyman, a former Google AI executive who previously served as the CEO of the AI startup Inflection. Microsoft reportedly paid $650 million to hire most of Inflection's staff and license its intellectual property. Apple, too, is working on its own multimodal model. Apple, like Microsoft in 2018-19, recognises that it is behind in AI and that "buy" rather than "build" looks like the better strategy for now, hence the deal with OpenAI. However, AI is too critical to Apple's user experience (with its focus on privacy), and I doubt it will outsource AI in the long run the way it outsourced search to Google.

As LLMs become more commoditised (or integrated for larger workloads), OpenAI's position in the stack is vulnerable, and its current business model makes it difficult to build a strong moat, raising questions about whether it can sustain its growth over the long term.

Google’s opportunities for integration

The Google I/O event showed promising integrations of LLMs with Google's existing ecosystem: Gmail, YouTube, Workspace, and Android. Despite recent hiccups in execution, Google has an integrated model (TPUs→Gemini models→Android→Google Apps→Pixel) that provides a cost advantage and an optimised infrastructure at a time when the marginal cost of serving GenAI models is non-trivial, especially when serving billions of users.

Integrated models win when a technology is in its infancy and optimisations are worth the incremental improvements in user experience and cost savings. This theory was originally proposed in the seminal paper Disruption, Disintegration and the Dissipation of Differentiability by Clayton Christensen, and is best explained by Ben Thompson in this Stratechery post:

Briefly, an integrated approach wins at the beginning of a new market, because it produces a superior product that customers are willing to pay for. However, as a product category matures, even modular products become “good enough” – customers may know that the integrated product has superior features or specs, but they aren’t willing to pay more, and thus the low-priced providers, who build a product from parts with prices ground down by competition, come to own the market.

Until LLMs become fully commoditised and inference costs approach zero, we can expect more integrated approaches across the stack to dominate the market. Even as modularisation increases lower in the stack (Moore’s law), top-level integration will continue to be crucial for providing a better user experience and privacy.

This point was clearly made in several demos like this one:

And the demo below, which uses Gemini's multimodal capabilities:

These are great examples of how integrating GenAI into existing workflows, making it context aware in the moment without switching applications, makes for a compelling user experience. With access to your emails, documents, photos, etc., RAG (Retrieval Augmented Generation) becomes more effective, takes full advantage of the model's larger context window, and will support more agentic and multi-step reasoning capabilities as they evolve.
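To make the RAG point concrete, here is a minimal sketch of the idea in Python, not how Google or anyone else actually implements it. It assumes a toy word-overlap (bag-of-words cosine) retriever instead of the learned embeddings a real system would use, and every name in it (`PERSONAL_DATA`, `retrieve`, `build_prompt`) is hypothetical. The point it illustrates is simply that the assistant first pulls the relevant pieces of your own data and only then hands them to the model as context.

```python
import math
import re
from collections import Counter

# Hypothetical stand-in for a user's emails, documents, and photo captions.
PERSONAL_DATA = [
    {"source": "email", "text": "Your flight to Lisbon departs Friday at 9:40 from gate B12."},
    {"source": "doc", "text": "Quarterly planning notes: hiring freeze until September."},
    {"source": "photo", "text": "Photo caption: parking spot level 3 row F at the airport."},
]


def tokenize(text):
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a, b):
    """Cosine similarity between two token-count vectors (Counters)."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query, documents, k=2):
    """Return up to k documents that share words with the query, best first."""
    q = Counter(tokenize(query))
    scored = [(cosine(q, Counter(tokenize(d["text"]))), d) for d in documents]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [d for score, d in scored[:k] if score > 0]


def build_prompt(query, documents, k=2):
    """Assemble the text that would be sent to an LLM: retrieved context, then the question."""
    context = retrieve(query, documents, k)
    lines = [f"[{d['source']}] {d['text']}" for d in context]
    return "Context:\n" + "\n".join(lines) + f"\n\nQuestion: {query}"


if __name__ == "__main__":
    print(build_prompt("When does my flight to Lisbon depart?", PERSONAL_DATA))
```

The more of your data the assistant can reach, the better the retrieved context, which is why an ecosystem owner with access to mail, documents, and photos has an edge over a standalone chatbot.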

Another highlight of the event was Project Astra, which lets you use your phone to interact with your environment. However, as in the OpenAI demo, you have to move your phone around, which doesn't make for a great user experience. The most interesting part of the demo was a prototype pair of glasses that appeared for just a second and made the rest of the interactive demo much more compelling.

Google has introduced a similar form factor, integrated with its apps, before: the Google Glass project in 2013. While retaining the form factor of traditional eyewear, Google Glass had a small prism-like display positioned above the user's right eye, allowing digital information to be superimposed on the real world. Its features included taking photos and videos, navigating with GPS, checking email and messages, and using various Google services through voice commands and touchpad gestures. However, due to a lack of clear use cases, privacy concerns, and the lack of an app ecosystem, it was discontinued in 2015.

I think Google had the right vision 10 years ago; it just did not have the applications and technology to make it a successful consumer product. Even after the recent failures of products like the Humane AI Pin and Rabbit R1, I believe that glasses or headsets with multimodal AI capabilities have the potential to become the next successful computing platform in the consumer market, succeeding smartphones. Google’s partnership with Magic Leap, a wearables startup that makes head-mounted augmented reality displays, was announced last week and signals a renewed intent from Google to explore this space.

Meta’s open source strategy and Mixed Reality ambitions

Although the AI headlines have been dominated by OpenAI, Microsoft, and Google, Meta has strategically made the right bets to capture the consumer market as the Generative AI market matures.

Meta has already built the infrastructure to serve probabilistic ad models at scale post-ATT, and this infrastructure spending and these hardware optimisations will inevitably help it serve its AI services, which use Llama 3 as the underlying LLM. Meta reports its financials separately for "Family of Apps" (Facebook, Instagram, etc.) and "Reality Labs" (its AR/VR hardware and software business unit), but strategically they serve each other, with Reality Labs hardware expected to be the next platform to host Meta's AI models and apps/services.

Mark Zuckerberg in Meta’s Q1 2024 Earnings Call Transcript:

We're building a number of different AI services, from Meta AI, our AI assistant that you can ask any question across our apps and glasses, to creator AIs that help creators engage their communities and that fans can interact with, to business AIs that we think every business eventually on our platform will use to help customers buy things and get customer support

From my point of view, Meta has the most effective strategy to win the AI race in the consumer market.

It already has strong network effects in its current apps where AI services can be integrated, helping to bring new experiences to those apps, such as creating animations from still images using Generative AI to drive engagement. It is able to integrate its LLM (Llama 3) with its custom silicon made especially for its workloads to drive cost efficiencies while serving its ~3 billion users. It is integrated where integration matters the most right now and aims to modularise the upstream hardware part of the stack to its advantage.

To that end, it is betting on open source to drive adoption of its AI services and commoditise its complements. Meta released Llama 3, a state-of-the-art LLM for its size (8B and 70B), and Meta Horizon OS, an open source OS for mixed reality headsets. Meta's goal is not to join the race to AGI, and it is not going the open source route to compete with OpenAI (as Elon Musk did by open sourcing Grok). It needs people to create content that will be consumed in the Meta ecosystem, and the more freely available the tools to create content, the better it is for Meta. So it is in Meta's favour if LLMs become commoditised (think of people creating content freely and cheaply with tools like Sora).

Furthermore, it sees tremendous value in integrating upwards in the stack to an operating system, as Google did with Android, and commoditising its complements (hardware) with Meta Horizon OS. By releasing an open source OS for mixed reality headsets, it can distribute a free operating system to commodity manufacturers and standardise the market around its services.

From Joel Spolsky’s Strategy Letter V:

All else being equal, demand for a product increases when the prices of its complements decrease….

When IBM licensed the operating system PC-DOS from Microsoft, Microsoft was very careful not to sell an exclusive license. This made it possible for Microsoft to license the same thing to Compaq and the other hundreds of OEMs who had legally cloned the IBM PC using IBM’s own documentation. Microsoft’s goal was to commoditize the PC market. Very soon the PC itself was basically a commodity, with ever decreasing prices, consistently increasing power, and fierce margins that make it extremely hard to make a profit. The low prices, of course, increase demand. Increased demand for PCs meant increased demand for their complement, MS-DOS. All else being equal, the greater the demand for a product, the more money it makes for you. And that’s why Bill Gates can buy Sweden and you can’t.

This is the same strategy Google used with Android. It may seem counterintuitive for a company to open source technology that cost millions of dollars to develop. But the reason is simple: you want to commoditise everything between you and your customers. This is what Google did to win the smartphone market (by market share) and create a formidable moat with Android. Bill Gurley wrote in 2011:

Android, as well as Chrome and Chrome OS for that matter, are not “products” in the classic business sense. They have no plan to become their own “economic castles.” Rather they are very expensive and very aggressive “moats,” funded by the height and magnitude of Google’s castle. Google’s aim is defensive not offensive. They are not trying to make a profit on Android or Chrome. They want to take any layer that lives between themselves and the consumer and make it free (or even less than free).

Because these layers are basically software products with no variable costs, this is a very viable defensive strategy. In essence, they are not just building a moat; Google is also scorching the earth for 250 miles around the outside of the castle to ensure no one can approach it. And best I can tell, they are doing a damn good job of it.

Open sourcing technology is often not motivated by altruism. Like Google, Meta wants to own the platform on which it deploys its apps rather than depend on Apple and Google for distribution (especially after ATT). Here is Mark Zuckerberg talking about owning the next computing platform in an internal email in 2015:

Our vision is that VR / AR will be the next major computing platform after mobile in about 10 years. It can be even more ubiquitous than mobile – especially once we reach AR – since you can always have it on. It’s more natural than mobile since it uses our normal human visual and gestural systems. It can even be more economical, because once you have a good VR / AR system, you no longer need to buy phones or TV’s or many other physical objects – they can just become apps in a digital store.

…The strategic goal is clearest. We are vulnerable on mobile to Google and Apple because they make major mobile platforms. We would like a stronger strategic position in the next wave of computing. We can achieve this only by building both a major platform as well as key apps.

And, more from Mark’s comments in Meta’s Q1 2024 Earnings Call Transcript:

Glasses are the ideal device for an AI assistant because you can let them see what you see and hear what you hear, so they have full context on what's going on around you as they help you with whatever you're trying to do. Our launch this week of Meta AI with Vision on the glasses is a good example where you can now ask questions about things you're looking at.

…As the ecosystem grows, I think there will be sufficient diversity in how people use mixed reality that there will be demand for more designs than we'll be able to build. For example, a work-focused headset may be slightly less designed for motion but may want to be lighter by connecting to your laptop. A fitness-focused headset may be lighter with sweat-wicking materials. An entertainment-focused headset may prioritize the highest-resolution displays over everything else. A gaming-focused headset may prioritize peripherals and haptics, or a device that comes with Xbox controllers and a Game Pass subscription out of the box.

Mark Zuckerberg's decade-long strategy seems to be coming to fruition with advances in multimodal LLMs. Mixed reality seems poised to become the ideal platform for consumers to use generative AI technology. Meta, with its open source LLMs, the right integrations, and the right business models, is strategically positioned to win the next platform war for AI (or it might share the market with Apple’s premium segment of Vision Pro users, in which case the market would look exactly like the smartphone market). The integration of generative AI with mixed reality can create immersive and interactive experiences that go beyond what traditional screens can offer.

While I'm not sure whether mixed reality headsets will reach mainstream adoption on the scale of the smartphone, with further advances in generative AI technology and spatial intelligence, they seem like the right form factor to bring new experiences to the consumer market.

As for Apple, I am looking forward to what it has to show at WWDC 2024 next week.